The following packages are required for processing the URLs and the links to the files. If they are not available in your R environment, you can install them with install.packages().

We will visit the weather data URL and download the CSV files for the year 2015 using R.

We will use the function getHTMLLinks() to gather the URLs of the files. Then we will use the function download.file() to save the files to the local system. As we will be applying the same code again and again for multiple files, we will create a function to be called multiple times. The filenames are passed to this function as parameters in the form of an R list object.

    # Gather the html links present in the webpage.
    # Identify only the links which point to the JCMB 2015 files.
    filenames <- links
    # Create a function to download the files by passing the URL and filename list.

The following Python script exports the LibreOffice auto correct entries into a flat file:

    # -*- coding: utf-8 -*-
    import os, sys, zipfile, xml.dom.minidom

    # Script to export LibreOffice Auto Correct Entries
    # into a flat file (e.g. to reuse some of them with autokey)

    # This is a ZIP where LibreOffice stores its auto correct entries (Windows path)
    ACEfile = r'C:\Program Files\OpenOffice\User\LibreOffice 3\user\autocorr\acor_it-IT.dat'
    ifname = 'DocumentList.xml'        # Name of the file inside the ZIP archive that contains auto correct entries
    ofname = 'AutoCorrectEntries.csv'  # any desired output file name for the export
    tagname = 'block-list:block'       # (as in DocumentList.xml)
    default_encoding = 'UTF-8'         # (as in DocumentList.xml)
    # Schema = # (as in DocumentList.xml)
    ofdelimiter = " "                  # any desired delimiter for export

    zif = zipfile.ZipFile(ACEfile).open(ifname, "r")  # access as read-only ZipExtFile object
    doctree = xml.dom.minidom.parse(zif)  # Parse the input file as DOM (document object model, xml-tree) into memory
    if doctree.
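The export script only gets as far as parsing DocumentList.xml, so here is a minimal sketch of how the remaining step (walking the `block-list:block` elements and writing them to a delimited file) might look. The function name `export_autocorrect`, the attribute names `block-list:abbreviated-name` and `block-list:name`, the tab delimiter and the sample XML are all assumptions for illustration, not part of the original script; check them against your own DocumentList.xml.

```python
import csv
import io
import zipfile
import xml.dom.minidom

# Hypothetical sample of what DocumentList.xml is assumed to look like.
SAMPLE_XML = (
    '<?xml version="1.0" encoding="UTF-8"?>'
    '<block-list:block-list xmlns:block-list="http://openoffice.org/2001/block-list">'
    '<block-list:block block-list:abbreviated-name="(c)" block-list:name="\u00a9"/>'
    '<block-list:block block-list:abbreviated-name="teh" block-list:name="the"/>'
    '</block-list:block-list>'
)


def export_autocorrect(zip_source, csv_target, ifname="DocumentList.xml",
                       tagname="block-list:block", delimiter="\t"):
    """Write one (abbreviation, replacement) row per entry; return the row count."""
    with zipfile.ZipFile(zip_source) as archive:
        with archive.open(ifname, "r") as zif:   # read-only ZipExtFile object
            doctree = xml.dom.minidom.parse(zif)
    writer = csv.writer(csv_target, delimiter=delimiter)
    count = 0
    for node in doctree.getElementsByTagName(tagname):
        # Assumed attribute names (not confirmed by the original script).
        short = node.getAttribute("block-list:abbreviated-name")
        full = node.getAttribute("block-list:name")
        writer.writerow([short, full])
        count += 1
    return count


def demo():
    """Run the export against an in-memory ZIP instead of a real .dat file."""
    archive_bytes = io.BytesIO()
    with zipfile.ZipFile(archive_bytes, "w") as archive:
        archive.writestr("DocumentList.xml", SAMPLE_XML.encode("utf-8"))
    archive_bytes.seek(0)
    output = io.StringIO()
    rows = export_autocorrect(archive_bytes, output)
    return rows, output.getvalue()
```

To run it against a real file, pass the path of the `.dat` archive as `zip_source` and a file opened with `open(ofname, "w", newline="", encoding="utf-8")` as `csv_target`.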

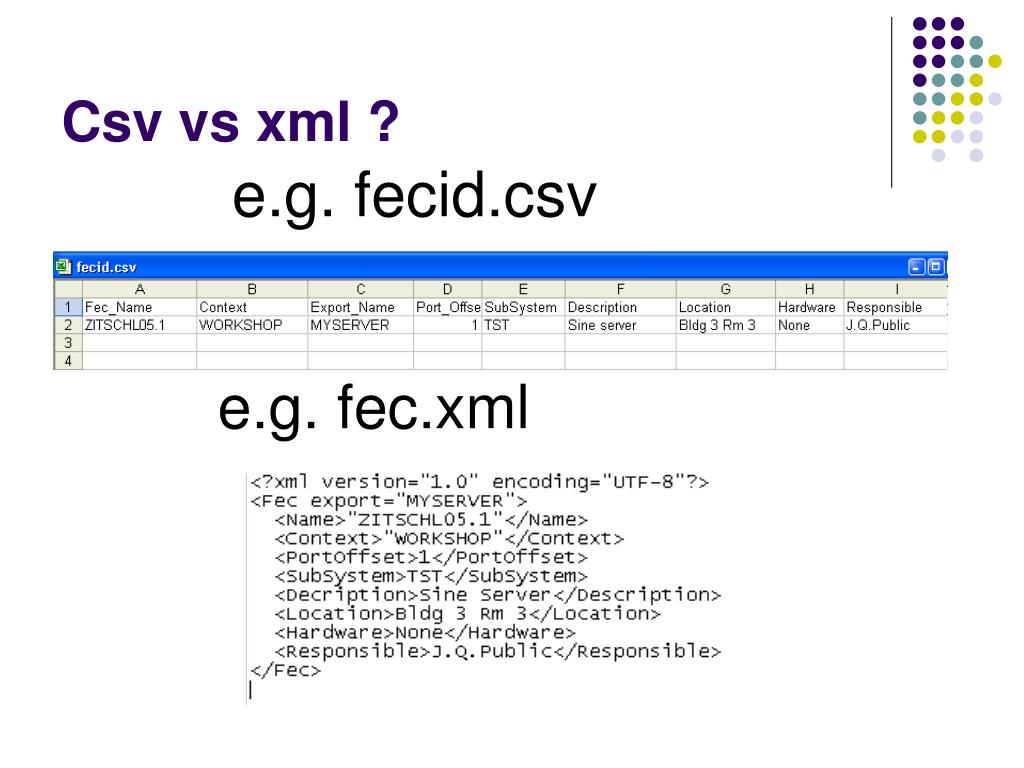

The steps to process XML files through Flexter are, first, a one-time input/output analysis, in which we a) process and analyse the XSD schema and b) calculate the target layout and generate source-to-target mappings, and then the repeatable processing of the XML data files. We need some XSD schema files and some sample data now.

Many websites provide data for consumption by their users. For example, the World Health Organization (WHO) provides reports on health and medical information in the form of CSV, txt and XML files. Using R programs, we can programmatically extract specific data from such websites. Some packages in R which are used to scrape data from the web are "RCurl", "XML", and "stringr". They are used to connect to the URLs, identify the required links for the files and download them to the local environment.
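The same connect, identify links, download workflow that the R packages cover can also be sketched with the Python standard library. This is only an illustration under stated assumptions: the names `LinkCollector`, `find_file_links` and `download_files`, the sample page, the `example.org` address and the `JCMB_2015` marker are hypothetical and do not come from the R tutorial.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.request import urlretrieve

# Hypothetical page content standing in for the real weather data page.
SAMPLE_PAGE = (
    '<a href="data/JCMB_2015_Jan.csv">Jan 2015</a>'
    '<a href="data/JCMB_2014_Dec.csv">Dec 2014</a>'
    '<a href="about.html">About</a>'
)


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def find_file_links(html_text, base_url, marker, suffix=".csv"):
    """Return absolute URLs of links that contain `marker` and end in `suffix`."""
    collector = LinkCollector()
    collector.feed(html_text)
    return [urljoin(base_url, link) for link in collector.links
            if marker in link and link.endswith(suffix)]


def download_files(urls):
    """Save each URL under its own file name in the current directory."""
    for url in urls:
        urlretrieve(url, url.rsplit("/", 1)[-1])


links = find_file_links(SAMPLE_PAGE, "https://example.org/weather/", "JCMB_2015")
```

Here `links` holds only the 2015 CSV link, resolved against the base URL; passing it to `download_files(links)` would fetch the file, which is left out of the sketch because it needs network access.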
